- Blog

- Scarecrow batman arkham knight

- Flexi 12 freezes

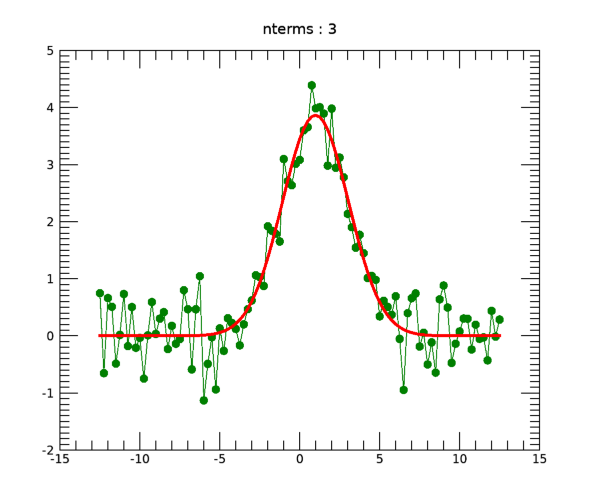

- Idl gaussian

- Remove alt enter excel

- Check printing printer

- How does the viewfinder anime end

- Need for speed most wanted ps2 action replay codes

- Adobe acrobat 9 for mac

- Lectra modaris install lisence files checkbox

- Generic usb hub driver windows 7 ultimate

- Best deck to use in arena 6

- Stellar speedup mac torrent

The model in our approach assumes that computer responses are a realization of a Gaussian process superimposed on a regression model, called a Gaussian process regression model (GPRM). Selecting a subset of variables or building a good reduced model in classical regression is an important step for identifying the variables that influence the responses and for further analysis such as prediction or classification. One reason to select variables for prediction is to prevent over-fitting or under-fitting to the data. However, only a few works on variable selection in GPRM have been done. In this paper, we propose a new algorithm to build a good prediction model among candidate GPRMs. It is a post-processing step for algorithms that include the Welch method suggested by previous researchers. The proposed algorithms select the non-zero regression coefficients (β's) using forward and backward methods along with a Lasso-guided approach. During this process, the covariance parameters (θ's) pre-selected by the Welch algorithm are held fixed. We illustrate the superiority of our proposed models over the Welch method and non-selection models using four test functions and one real data example.

Keywords: Bayesian information criterion, best linear unbiased prediction, covariance matrix, Kriging, maximum likelihood estimation, metamodel, numerical optimization

The development of computer technology has enabled researchers to replace physical experiments with complex computer simulation codes. In addition, computer codes often have high-dimensional inputs. In these cases, computer simulation codes can be computationally expensive; therefore, it can be impossible to use a computer simulation code directly for the design and analysis of computer experiments (DACE), because optimizing an objective function requires many runs of the code. However, one can use a statistical model as a metamodel to approximate the functional relationship between the input variables and the response values of a computer simulation, instead of the simulation code itself. Typically, a computer code is deterministic or has a small measurement error. Work published in 1989 suggested adopting a GPRM as a metamodel for the computer simulation code. The GPRM has often been used successfully for the modeling of computer simulation data. A few examples of recent works that used GPRM for this purpose are as follows:

- Mechanical engineering: Slonski (2011), Lee and Gard (2014), and Dubourg et al.
- Health economics: Rojnik and Naveršnik (2008), and Stevenson et al. (2004)
- Computer and system engineering: Kennedy et al. (2008), Caballero and Grossmann (2008), and Liu et al. (2010), Rohmer and Foerster (2011), Deng et al.

However, many of these studies did not apply a systematic model selection method, which motivated the current work. In classical regression, when there are many independent variables, we select the subset of independent variables that gives a good fit. The reason is that over-fitting can lead to high variance in the predictions, while under-fitting can lead to bias in the predictions. Therefore, when building a prediction model, it is important to find the best subset of independent variables that gives a good fit (Jung and Park, 2015; Lee, 2015). The same reasoning and approach apply to GPRM. However, only a few works on variable selection in GPRM have been done (Linkletter et al., 2006; Marrel et al., 2008; Welch et al., 1992), and almost all previous studies employed a simple GPRM without variable and parameter selection. Of these, the 2006 work used a Bayesian approach, while Marrel et al. (2008) used a corrected Akaike information criterion; both differ from our approach.
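To make the idea of selecting regression coefficients while the covariance parameters stay fixed more concrete, here is a minimal Python sketch. It is not the authors' algorithm: it covers only a forward step scored by the Bayesian information criterion, with no backward or Lasso-guided step, and the θ's are simply passed in by the caller rather than pre-selected by the Welch method. The function names (`gauss_corr`, `bic_for_subset`, `forward_select`), the Gaussian correlation form, the small nugget, and the parameter count used in the BIC are all assumptions made for illustration.

```python
import numpy as np

def gauss_corr(X, theta, nugget=1e-8):
    """Assumed Gaussian correlation: R_ij = exp(-sum_k theta_k (x_ik - x_jk)^2)."""
    d = X[:, None, :] - X[None, :, :]
    R = np.exp(-np.einsum("ijk,k->ij", d ** 2, theta))
    # A small nugget keeps the Cholesky factorization numerically stable.
    return R + nugget * np.eye(len(X))

def bic_for_subset(F, y, L):
    """BIC of y = F beta + Z, where Z has correlation R = L L^T (theta fixed)."""
    n = len(y)
    Fw = np.linalg.solve(L, F)  # whitened regressors
    yw = np.linalg.solve(L, y)  # whitened responses
    beta, *_ = np.linalg.lstsq(Fw, yw, rcond=None)  # generalized least squares
    resid = yw - Fw @ beta
    sigma2 = resid @ resid / n  # ML estimate of the process variance
    logdet_R = 2.0 * np.sum(np.log(np.diag(L)))
    neg2loglik = n * np.log(2.0 * np.pi * sigma2) + logdet_R + n
    # Assumption: count the betas plus sigma^2; theta is fixed, so not counted.
    return neg2loglik + (F.shape[1] + 1) * np.log(n), beta

def forward_select(X, y, theta):
    """Greedy forward selection of linear regression terms by BIC."""
    n, d = X.shape
    L = np.linalg.cholesky(gauss_corr(X, theta))
    selected = []
    best_bic, best_beta = bic_for_subset(np.ones((n, 1)), y, L)
    improved = True
    while improved and len(selected) < d:
        improved = False
        trials = []
        for j in range(d):
            if j in selected:
                continue
            F = np.column_stack([np.ones(n), X[:, selected + [j]]])
            bic, beta = bic_for_subset(F, y, L)
            trials.append((bic, j, beta))
        bic, j, beta = min(trials, key=lambda t: t[0])
        if bic < best_bic:  # keep the term only if it lowers the BIC
            best_bic, best_beta = bic, beta
            selected.append(j)
            improved = True
    return selected, best_beta, best_bic
```

Calling `forward_select(X, y, theta)` with an `n × d` input array, a response vector, and a length-`d` vector of fixed θ's returns the indices of the selected inputs (in the order they were added), the estimated coefficients (intercept first), and the final BIC. Factorizing the correlation matrix once and reusing it for every candidate subset is what makes fixing θ computationally convenient in this sketch.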